By Beth Wallace

For the second season of his podcast Shell Game, journalist Evan Ratliff launched an unusual startup: he was the only human working alongside artificial intelligence (AI) colleagues. He conducted meetings, brainstormed with his AI co-founders and assigned other agents to roles such as head of HR and chief technology officer.

Ratliff quickly discovered the limitations of trying to make AI agents behave like “real” employees.

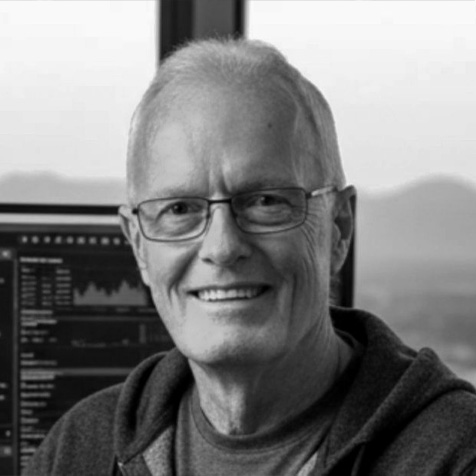

“He found that once he gave the agents the ability to use communication channels like internal messaging, they argued with each other, became disobedient and started questioning his leadership,” explains Peter Worn, joint managing director of wealth tech consultants Finura Group. “It is a great example of what could go wrong if we give AI models too much free rein.”

Ensure a healthy relationship with AI

Ratliff’s venture sits at the heart of the debate around treating AI agents like colleagues. As AI becomes more personalised, to what extent should these agents be treated like human workers?

According to David Tuffley, a senior lecturer at Griffith University’s school of information and communication technology, projecting too much humanity onto AI is a mistake as it can lead to unhealthy dependence.

“If people become dependent on the AI and stop thinking for themselves and taking responsibility for their lives, there is a risk they will become dependent on it,” he says.

This risk extends to organisations that rely too heavily on AI tools, Tuffley continues. “A healthy relationship is when the AI is seen as a super useful collaborator, like a silent partner that helps people with various jobs.”

Onboarding: Integrating AI into company culture

With many organisations still waiting to see the full benefits of AI in the workplace, some are adopting “onboarding” as a practical method for integrating AI agents into company culture and showing them the ropes.

Onboarding AI agents could follow many of the same principles used for human workers, Tuffley says. This includes educating them about organisational culture, behavioural expectations and operational processes.

“You are acquainting them with how things work in that organisation,” he says. “Part of that onboarding would be to give them as much information as possible.”

Another element of onboarding could be language training, such as teaching agents to adapt communications to suit different comprehension levels, languages or cultural groups.

Language training must be managed carefully because there is always a possibility that an AI agent could offend a client, Worn says. “Or if the client becomes aggressive with the agent, it could stand up for itself — you just don’t know.”

He believes the most effective way to onboard AI agents is to treat them like “the smartest, most disobedient employee you’ve got”.

“A lot of the training of AI agents is as much about telling them what not to do as what to do, which is probably a little different to how you would treat a human employee.”

Managing AI agents in your organisation

While Ratliff gave his AI colleagues prominent positions on his company’s organisational chart, Worn and Tuffley agree that this is not necessary for most businesses at this stage. In fact, assigning an agent a broad title can be counterproductive.

The most successful AI agent deployments, Worn says, focus on narrow use cases, with only a few steps and a clearly defined task, “rather than trying to build an AI agent that does everything, which is often where things go wrong”.

Meanwhile, as agentic AI becomes mainstream with multiple models performing specific jobs more or less autonomously within a workflow, Tuffley expects performance reviews to become standard practice. In 2025, McKinsey reviewed more than 50 of its agentic AI builds and recommended continual feedback to support improvement.

However, Worn advises organisations to avoid hasty judgements when assessing AI tools. He argues that the “human in the loop”, the person responsible for checking that agents are performing as intended, must understand how AI systems operate, because inexperienced users tend to lose trust in the technology after a single error or hallucination.

“That has been a big learning for us — to not throw AI agents on teams who haven’t been brought on the journey to understand how the AI is supposed to work,” he says.

The ethical issues of AI employees

There are ethical implications to consider when introducing AI colleagues into the workplace.

Tuffley emphasises the importance of avoiding “black box AI” that lacks transparency and encourages organisations to understand how tools make decisions so they can adjust models when needed.

Worn adds that professional services firms must also consider their disclosure obligations around AI use. “If there is AI being used at any step of the process to do a client’s work, are you ethically obliged to disclose that proactively to clients?”

He flags that some clients may reject AI use entirely, which poses challenges for AI-first businesses that may be forced to end those relationships.

With 82 per cent of global business leaders expecting to use AI agents to meet the demand for more workforce capacity in the next 12–18 months, AI-resistant clients may find fewer AI-free options available.

For organisations, a key consideration will be how to introduce AI in the workplace responsibly while maintaining a clear distinction between humans and digital colleagues where, as Tuffley notes, “the human stays in control always, and the AI is there as an assistant, not the boss”.